
Docker For Mac Kubernetes Dashboard

10/26/2021

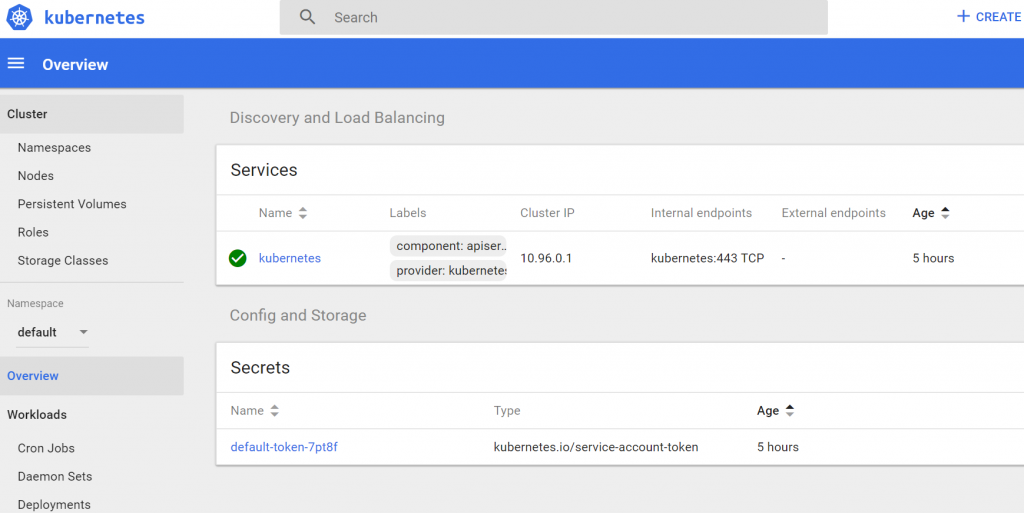

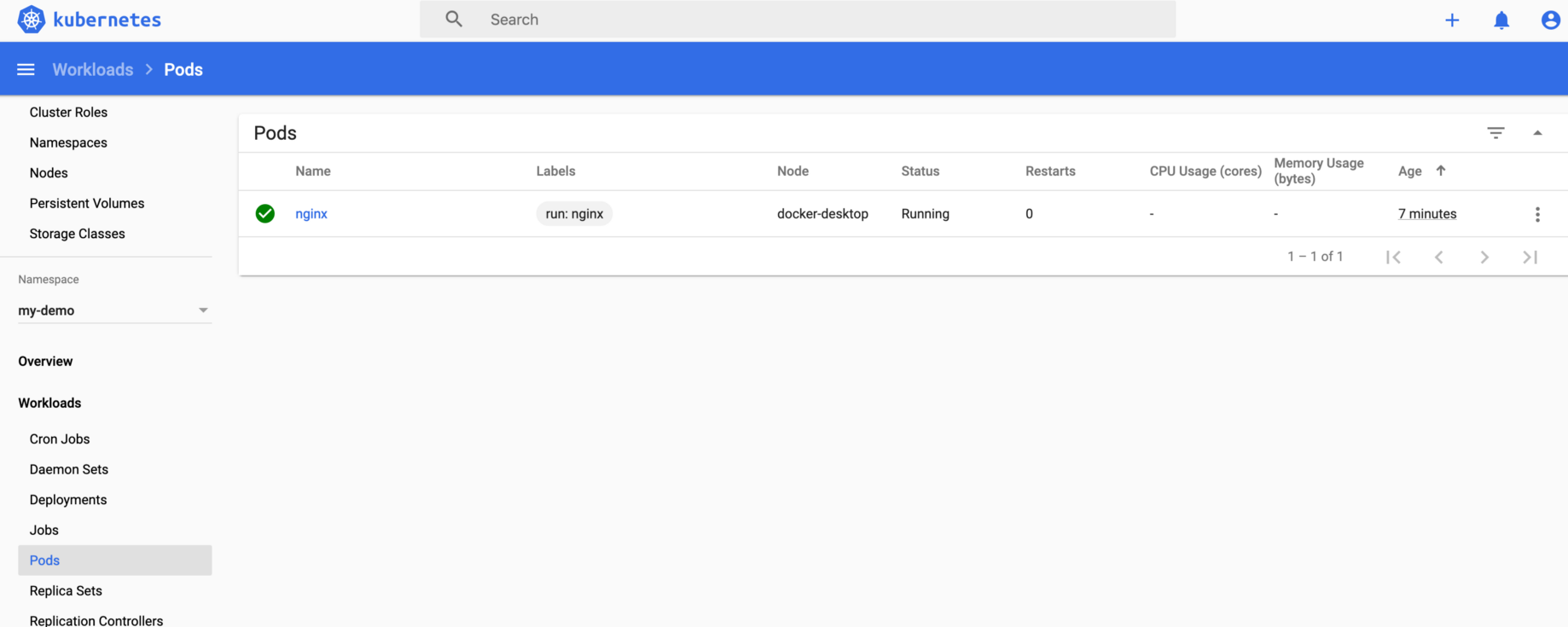

Docker Desktop for Mac/Windows with Kubernetes: the master branch corresponds to Docker for Mac/Windows 4.0.0 (Docker CE 20.10.8, Kubernetes 1.21.4). Our goal is to move this to a... The double 'dags' in the last line mirrors the layout of our airflow-dags repository, so that weflow imports work correctly.

To delete every Docker container, run `docker rm -f $(docker ps -a -q)`. This must be run first because images are attached to containers. To delete every Docker image, run `docker rmi -f $(docker images -q)`. Of course you don't want to do this if you're using Docker across multiple projects: you'll find yourself in a world of hurt if you break your other images.

Kubernetes Dashboard was developed to make things easier, and it has gained the attention of people who were hesitant about Kubernetes. You can use Dashboard to deploy containerized applications to a Kubernetes cluster, troubleshoot your containerized application, and manage the cluster resources. You can also use it to get an overview of applications running on your cluster, as well as for creating or modifying individual Kubernetes resources (such as Deployments and Jobs). The Kubernetes Dashboard is also part of the new Certified Kubernetes Security Specialist (CKS) exam, where it falls under the Cluster Setup topic.

In the current version of Docker Desktop for Mac OS X (2.5.0.1 at the time of posting), the correct link to the Kubernetes Dashboard is /kubernetes-dashboard/services/https:kubernetes-dashboard:/proxy//login. You can check the namespaces with curl.
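The Dashboard link quoted above follows the general Kubernetes pattern for reaching a Service through the API server proxy. The sketch below builds such a URL in Python. It assumes `kubectl proxy` is running on its default address (`http://localhost:8001`) and that the Dashboard Service is named `kubernetes-dashboard` in a namespace of the same name; the function name `service_proxy_url` is made up for illustration, and your cluster may use different values.

```python
from urllib.parse import quote


def service_proxy_url(namespace, service, scheme="https", port_name="",
                      api_base="http://localhost:8001"):
    """Build an API-server proxy URL for a Kubernetes Service.

    Pattern (from the Kubernetes docs on apiserver proxy URLs):
    /api/v1/namespaces/<namespace>/services/<scheme>:<service>:<port_name>/proxy/

    api_base is an assumption: the default address of `kubectl proxy`.
    """
    # "https:kubernetes-dashboard:" means: HTTPS backend, default (unnamed) port.
    target = f"{scheme}:{service}:{port_name}"
    return (
        f"{api_base.rstrip('/')}/api/v1/namespaces/{quote(namespace)}"
        f"/services/{quote(target, safe=':')}/proxy/"
    )


if __name__ == "__main__":
    # Assumed names; adjust to match `kubectl get svc --all-namespaces`.
    print(service_proxy_url("kubernetes-dashboard", "kubernetes-dashboard"))
```

Keeping the scheme and port name as parameters makes it easy to point the same helper at another Service behind the proxy.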

Airflow services information: in the server container, redis, the airflow webserver and the scheduler are running. In the worker container, the airflow worker and the celery flower UI service are running. The Airflow portal uses port 2222, airflow celery flower uses 5555, redis uses 6379, and log files are exchanged on port 8793.

Docker Hub is the world's largest repository of container images, with an array of content sources including container community developers, open source projects and independent software vendors (ISVs) building and distributing their code in containers. Users get access to free public repositories for storing and sharing images, or can choose... It offers production-ready Docker containers that you can run, deploy, and scale.

Foreword: this installation uses Airflow 1.10, which depends on Python and a database; MySQL is the database chosen here. The components and versions installed are: Airflow = 1.10.0, Python = 3.6.5, MySQL = 5.7. Python installation: omitted; for details see "Python 3 installation (Linux environment)". Installing MySQL…

Now you can add state-of-the-art machine learning features to your applications. This will run the docker container with the nvidia-docker runtime, launch the TensorFlow Serving Model Server, bind the REST API port 8501, and map our desired model from our host to where models are expected in the container. We also pass the name of the model as an environment variable, which will be important when we query the model.

How to declare volumes in Docker: there are two ways of declaring volumes, the imperative way (the Docker client) and the declarative way (a Docker Compose YAML file or a Dockerfile).
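The TensorFlow Serving passage above says the model name matters when the model is queried. As a rough illustration, the sketch below builds (but does not send) a prediction request against the REST API on port 8501, using TensorFlow Serving's `/v1/models/<name>:predict` endpoint. The model name `half_plus_two`, the input values, and the helper name `build_predict_request` are assumptions for the example, not details from the original post.

```python
import json
from urllib.request import Request


def build_predict_request(model_name, instances, host="localhost", port=8501):
    """Build a POST request for TensorFlow Serving's REST predict endpoint.

    model_name must match the name passed to the container as an
    environment variable when it was started.
    """
    url = f"http://{host}:{port}/v1/models/{model_name}:predict"
    body = json.dumps({"instances": instances}).encode("utf-8")
    return Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    # Hypothetical model name and inputs, for illustration only.
    request = build_predict_request("half_plus_two", [1.0, 2.0, 5.0])
    print(request.get_method(), request.full_url)
    print(request.data.decode("utf-8"))
    # To actually send it (requires a running server):
    #   from urllib.request import urlopen
    #   print(urlopen(request).read().decode("utf-8"))
```

Separating request construction from sending keeps the example runnable on a machine where no model server is up.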

The Docker distribution of Luna is designed to allow users to test both lunaC and lunaR, that is, the C/C++ command-line and the R package versions of Luna. Running `$ docker kill $(docker ps -l -q)` stops the most recently created container and prints its ID, here `9f09319d1a80`.

Set up Google Cloud Platform: go to cloud.google.com and click Try it Free to create an account and start a free trial.

To use Airflow more elegantly you need to configure the executor and the database, and as with any environment setup, the more components you have to install, the more the pain grows. In this situation, Docker is a very good tool for reducing that pain.

Deploying Airflow with Docker and Running your First DAG. The rest of this post focuses on deploying Airflow with Docker, and it assumes you are somewhat familiar with Docker or have read my previous article on getting started with Docker. Based on content from: Getting Started with Airflow Using Docker, Mark Nagelberg.

When a Docker image is launched, it exists in a container. For example, multiple containers may run the same image at the same time on a single host operating system. This guide shows you how to list, stop, and start Docker containers.

I want to add DAG files to Airflow, which runs in Docker on Ubuntu. I used the following git repository, containing the configuration and a link to the docker image. When I run `docker run -d -p 8080:8080 puckel/docker-airflow webserver`, everything works fine.

Docker Hub is the place where open Docker images are stored. When we ran our first image by typing `docker run --rm -p 8787:8787 rocker/verse`, the software first checked whether this image was available on your computer, and since it wasn't, it downloaded the image from Docker Hub.

Docker Desktop is the preferred choice for millions of developers who are building containerized apps. This means Docker Desktop only uses the amount of CPU and memory it needs, while enabling CPU- and memory-intensive tasks such as building a container to run much faster. Swarm is not well supported by docker_operator, due to this issue.
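For the question above about adding DAG files to an Airflow instance running in Docker, one common approach is to bind-mount a host directory over the container's DAGs folder. The sketch below only assembles the `docker run` command line; it does not execute it. It assumes the `puckel/docker-airflow` image keeps its DAGs in `/usr/local/airflow/dags` (as that image's README describes), and the host path and the helper name `airflow_run_command` are hypothetical.

```python
import shlex
from pathlib import PurePosixPath


def airflow_run_command(host_dags_dir, host_port=8080,
                        image="puckel/docker-airflow",
                        container_dags_dir="/usr/local/airflow/dags"):
    """Return the argv list for starting the Airflow webserver with DAGs mounted.

    container_dags_dir is an assumption based on the puckel/docker-airflow
    README; other images may use a different AIRFLOW_HOME.
    """
    if not PurePosixPath(host_dags_dir).is_absolute():
        # Relative paths would be treated by Docker as named volumes.
        raise ValueError("host_dags_dir must be an absolute path")
    return [
        "docker", "run", "-d",
        "-p", f"{host_port}:8080",                        # host port -> webserver port
        "-v", f"{host_dags_dir}:{container_dags_dir}",    # bind-mount the DAG files
        image, "webserver",
    ]


if __name__ == "__main__":
    # Hypothetical host directory containing your DAG .py files.
    command = airflow_run_command("/home/user/airflow/dags")
    print(shlex.join(command))
    # To run it for real: subprocess.run(command, check=True)
```

Building the command as a list rather than a single string avoids quoting problems if the host path contains spaces.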
Airflow needs a home directory: `~/airflow` is the default, but you can optionally export a different location and lay the foundation somewhere else if you prefer. The relevant Apache Airflow issue is AIRFLOW-51, "docker_operator - Improve the integration with swarm".

Using Docker in Pipeline can be an effective way to run a service on which the build, or a set of tests, may rely. Similar to the sidecar pattern, Docker Pipeline can run one container "in the background" while performing work in another. Utilizing this sidecar approach, a Pipeline can have a "clean" container provisioned for each Pipeline run.

Access Docker Desktop and follow the guided onboarding to build your first containerized application in minutes.

Airflow communicates with the Docker repository by looking for connections with the type "docker" in its list of connections.

The code is located (as usual) in the repository indicated before, under the "hive-example" directory. What is supplied is a docker compose script (docker-compose-hive.yml), which starts a docker container and installs the hadoop+hive client into airflow, along with other things needed to make it work. You may need a beefy machine with 32GB to get things to run.

Build the demo image with `docker build -t dagster-airflow-demo-repository -f /path/to/Dockerfile .`. By default, the DagsterDockerOperator will... A Docker bind-mount can be used for filesystem intermediate storage if you want your containerized...

The container still starts normally with the command `docker run -d -p 8082:8080 puckel/docker-airflow`; after entering the container you can find that the... According to the official docker-airflow documentation on GitHub, start the LocalExecutor and CeleryExecutor modes (these two modes...

The first step towards Kubernetes certification is installing Kubernetes.

The puckel/docker-airflow Dockerfile header reads: VERSION 1.9.0-3; AUTHOR: Matthieu "Puckel_" Roisil; DESCRIPTION: Basic Airflow container; BUILD: `docker build --rm -t puckel/docker-airflow .`

Apache Airflow Docker container deployment and customization ideas.
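The note above that Airflow needs a home directory, defaulting to `~/airflow`, can be mirrored in a small helper when scripts need to locate Airflow's files. This sketch assumes the override is done through the `AIRFLOW_HOME` environment variable and that DAGs live in a `dags` subfolder of that home, which is Airflow's documented default; the function names are invented for this example.

```python
import os
from pathlib import Path


def resolve_airflow_home(environ=None):
    """Return the Airflow home directory.

    Uses AIRFLOW_HOME when set (assumed override mechanism),
    otherwise falls back to the default ~/airflow.
    """
    env = os.environ if environ is None else environ
    return Path(env.get("AIRFLOW_HOME", "~/airflow")).expanduser()


def default_dags_folder(environ=None):
    """Return the default DAGs folder, <airflow home>/dags."""
    return resolve_airflow_home(environ) / "dags"


if __name__ == "__main__":
    print(resolve_airflow_home())
    print(default_dags_folder({"AIRFLOW_HOME": "/opt/airflow"}))
```

Accepting an explicit mapping instead of always reading `os.environ` makes the lookup easy to exercise without changing the real environment.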


Author: Tracey
